SECRETS OF CINELERRA

Version 10.1

by Adam Williams

Copyright © 2011-2026

Sections from https://cinelerra-gg.org/download/CinelerraGG_Manual/Contents.html

2 ABOUT CINELERRA

It’s important to remember where it came from to differentiate what’s

really needed from bloat.

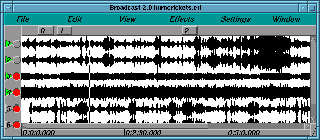

BOBCAST/BROADCAST 1.0

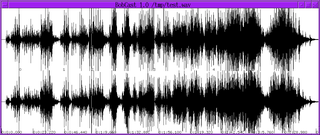

The 1st attempt at editing media was written in 1996. It just loaded a

single WAV file or edit list from the command line & shifted bits of it

around in a single window. Then it could render a new wav file or save

the edit list. It used bare X11 calls & no toolkit.

The typical source in those days was 1 file containing a complete

recording session. Editing just needed to move bits of the 1 file

around.

Its big magic tricks were handling files up to 2 gigabytes with only 64

megs of RAM & non destructive editing. That was a feature normally only

accessible to the highest end professional audio houses in those days.

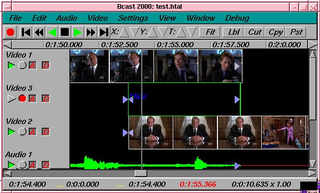

BROADCAST 2.1

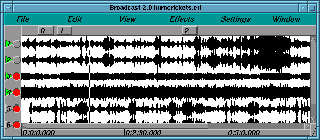

In 1997 Broadcast 1.0 was replaced by Broadcast 2.0. This time the

window had a menubar, patchbay, console, and transport control.

Broadcast 2.0 still only handled audio but this time it handled

unlimited tracks, and it could perform effects on audio and save the

resulting waveform to disk. More notably a few effects could be

performed as the audio was playing back, in realtime. A user could mix

unlimited numbers of tracks, adjust fade, pan, and EQ, and hear the

result instantly. Amazingly this real time tweaking is still

unavailable on most audio programs.

BROADCAST 2000

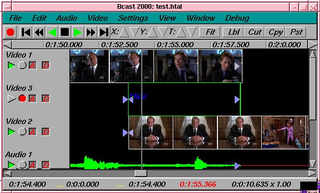

But Broadcast 2.0 still didn’t handle video and it wasn’t very graceful

at audio either. In 1999 video broke into the story with Broadcast

2000. This iteration of the Broadcast series could do wonders with

audio and offered a pretty good video feature set. It could edit video

files up to 64 terabytes. It could do everything Broadcast 2.1 did

with audio except now all effects for video and audio could be chained

and performed on the fly, with instant feedback as a user tweaked

parameters during playback. Broadcast 2000 made it very easy to do a

lot of processing and editing on video and audio that would otherwise

involve many hours setting up command line sequences and writing to

disk. For a time it seemed as if the original dream of immersive movie

making for everyone regardless of income level had arrived.

Later on Broadcast 2000 began to come up short. Its audio and video were

graceful if you knew how to use it efficiently, but quality issues and

new user interface techniques were emerging. Broadcast 2000 kept the

audio interface from its ancestors, which didn’t apply well to video.

Users likewise were maturing. No longer was it sufficient to just edit

video on a UNIX box. Most users expected on UNIX the same thing they

got in Win or Mac. A lot of users complained about the lack of support &

wanted it taken down if it wasn’t supported. Then a lot of users

complained that it was taken down.

CINELERRA

In mid 2000, a Broadcast 2000 replacement began. The Broadcast name was

officially retired from the series and the software would now be called

Cinelerra. Cinelerra would begin to emulate some of the features found

in Win and Mac software while not attempting to become a clone. Alas,

not strictly adhering to standard features has been the biggest source

of contention.

As with Broadcast 2000, most users oppose this program being distributed

without full support, full patch debugging & integration, adherence to

standard features, & want it taken down. Whether someone should be

allowed to do their own thing is an age-old question. Other projects

got ahead of the support problem from the beginning by raising enough

funds for full time employment, but doing so requires adhering to

accepted designs & features they’re not always fond of.

LINUX DERIVATIVES

Linux became more and more fragmented after corporations adopted it.

Threading once worked the same on all derivatives. Today there are more

threading models than days of the week. We try to focus on 1 of the

most popular Linux derivatives at any moment. The threading model is

ported to that Linux derivative shortly before a release, but Linux

derivatives quickly evolve to new threading models and everything

breaks.

Also, there is no consistent behaviour for sound and video drivers. The

situation with video capture has improved in that modern video sources

can all be mounted like disk drives. The audio capture drivers have

been a bit more reliable.

2.1 ABOUT THIS MANUAL

This is the original manual for Cinelerra. This manual has been copied

and translated into many languages on many websites in varying degrees

of completeness.

Organizing information in the easiest manner for users to find out what

they need to know is sort of like cataloging the internet. They’ve

been trying to get it right for 30 years and will probably keep trying

until the end of time.

There are a lot of fragments of documentation scattered throughout the

internet about Cinelerra. This document attempts to combine all the

pieces of information in one place.

Like the operating system and compiler for a piece of software, the

writing format is the most important choice for a document. We wanted

a format which would be readable regardless

of corporate whims and fads. A piece of software which compiles on GCC

and Linux will be usable as long as there are C compilers. Documents

written in Texinfo will be readable as long as there’s a C compiler.

After many years of searching for the perfect documentation format

we’ve arrived at Texinfo. This format can be converted to HTML,

printed, automatically indexed, but most importantly is not bound to

any commercial word processor.

There are few screenshots in this manual. Screenshots become obsolete

quickly and as a result get confusing. What looks one way in a

screenshot will always look different in the real program because the

real program and the manual are always evolving, never perfectly

synchronized. It is true that manuals should have screenshots, but our

objective in omitting screenshots is to keep the software costs minimal

so you don’t have to pay for it. That includes additional labor to

synchronize the manual with the software.

In addition to telling you the basic editing features of Cinelerra this

manual covers tricks that won’t be described anywhere else. We’re

going to try to come up with certain things you can do with Cinelerra

that you wouldn’t think of on your own.

3 INSTALLATION

The Cinelerra package contains Cinelerra and most of the libraries

needed to run it. We try to include all the dependencies because of

the difficulty in tracking down the right versions. Also included are

some utilities for handling files. The following are the general

contents of all Cinelerra packages.

- Cinelerra executable - cinelerra

- Cinelerra plugins - These all end in .plugin

- doc - The build copy of this documentation

- fonts - Font files for the titler

- ffmpeg - A version of ffmpeg for use with the command line encoding feature.

- mpeg3cat - Utility for reading an MPEG file from a certain standard and outputting it to stdout.

- mpeg3toc, mpeg3cat, mpeg3dump, mpeg3peek - Utilities for reading MPEG files.

- previews - Project files which are loaded as transition previews.

3.1 INSTALLING A PRECOMPILED BINARY

Cinelerra is most easily installed by downloading a precompiled binary and

running

tar xvf cinelerra*.tar.xz

cd cinelerra*

./cinelerra.sh

It should have all the hardest dependencies already taken care of.

This doesn’t always work because there are many versions of libc, each

incompatible with the others. This is the biggest reason to compile

from scratch.

3.2 COMPILING FROM SCRATCH

It should be noted that Cinelerra binaries are built with the free GNU

compiler and very conservative optimization flags. Alternative

optimization flags and compilers produce varying

results. Compiling the source is hard and there’s no warranty if the

source code fails to compile, but the method for compiling starts by

downloading the source code and decompressing.

The compilation is verified on a vanilla Ubuntu installation of a

certain version. It has a lot of dependencies. The README file

contains more info on the dependencies.

tar xvf cinelerra*.tar.xz

Enter the main directory

Then run

This checks the build environment for the right tools and should give

you an error if a tool is missing. Once that succeeds run

The make procedure should run through all the directories and put

binaries in the i686 or x86_64 directories.

A lot of libraries are included in thirdparty directories to get the

version numbers right.

Once finished, run

to install the binaries in the bin/ sub directory. It doesn’t install

anything in the system folders.

3.3 RUNNING CINELERRA

The simplest way to run Cinelerra is by going into bin/ & running

This command hides a much more capable command line interface. Run

cinelerra -h to get a listing of command line options. The use of

these options is described in several sections.

For rendering from the command line see RENDERING FILES.

4 CONFIGURATION

Because of the variety of uses, Cinelerra cannot be run optimally

without some intimate configuration for your specific needs. Very few

parameters are adjustable at compile time. Runtime configuration is

the only option for most configuration because of the multitude of

parameters.

Here we discuss not only the configuration options but which of the

different APIs in Linux are supported.

Go to settings->preferences to see the options.

4.1 ENVIRONMENT VARIABLES

In UNIX derivatives, environment variables are global variables in the

shell which all applications can read. They are set with a command

like export VARIABLE=value in bash. All the environment variables can be

viewed with a command like env. Cinelerra recognizes the following

environment variables:

- LADSPA_PATH - If you want to use LADSPA plugins, this must be

defined: a colon separated list of directories to search for LADSPA

plugins. These are not native Cinelerra plugins. See LADSPA EFFECTS.
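
As a rough illustration of what a colon separated list means in practice, the sketch below splits such a value into directories in search order. It is not Cinelerra's code. The function name and the example directories are assumptions chosen only for the example.

```python
import os


def ladspa_search_dirs(environ=None):
    """Return the directories named in LADSPA_PATH, in search order."""
    env = os.environ if environ is None else environ
    raw = env.get("LADSPA_PATH", "")
    # Empty entries, such as the one left by a trailing colon, are skipped.
    return [d for d in raw.split(":") if d]


# Hypothetical value; real plugin directories vary between systems.
example = {"LADSPA_PATH": "/usr/lib/ladspa:/usr/local/lib/ladspa"}
print(ladspa_search_dirs(example))
```

If the variable is unset the list is empty, which matches the statement that it must be defined before LADSPA plugins can be found.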

4.2 AUDIO DRIVERS

The audio drivers are used for both recording and playback to get data

to and from the hardware. Since the same drivers are used for both

recording and playback, their functionality is described here in a

separate section. The most tested driver is ALSA.

4.2.1 COMMON SOUND DRIVER ATTRIBUTES

- DEVICE PATH

Usually a file in the /dev/ directory which controls the

device.

- BITS

The number of bits of precision Cinelerra should set the device for.

This sometimes has a figurative meaning. Some sound drivers need to be

set to 32 bits to perform 24 bit playback and won’t play anything when

set to 24 bits. Some sound drivers need to be set to 24 bits for 24

bit playback.
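
One plausible reason for the 32 bit quirk, offered here as an assumption rather than something this manual states, is that many devices carry 24 bit samples inside 32 bit containers. The sketch below shows the two layouts side by side. The function names are made up for the example.

```python
import struct


def pack_24_in_32(samples):
    """Pack signed 24 bit samples left-justified in little-endian 32 bit words."""
    out = bytearray()
    for s in samples:
        if not -(1 << 23) <= s < (1 << 23):
            raise ValueError("sample out of 24 bit range")
        # Shift into the top 3 bytes; the low byte is padding.
        out += struct.pack("<i", s << 8)
    return bytes(out)


def pack_24_in_24(samples):
    """Pack signed 24 bit samples as 3 little-endian bytes each."""
    out = bytearray()
    for s in samples:
        if not -(1 << 23) <= s < (1 << 23):
            raise ValueError("sample out of 24 bit range")
        out += (s & 0xFFFFFF).to_bytes(3, "little")
    return bytes(out)


samples = [0, 1, -1]
print(len(pack_24_in_32(samples)), "bytes in 32 bit containers")
print(len(pack_24_in_24(samples)), "bytes in packed 24 bit form")
```

The audio data is the same either way. Only the container size differs, which is why the BITS value may need to be 32 for a card that is really playing 24 bits.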

4.2.2 OSS

This was the first Linux sound driver. It had an open source

implementation and a commercial implementation with more sound cards

supported. It was the standard sound driver up to Linux 2.4. It still

is the only sound driver which an i386 binary can use when running on

an x86_64 system.

4.2.3 OSS Envy24

The commercial version of OSS had a variant for 24 bit 96 kHz

soundcards. This variant required significant changes to the way the

sound drivers were used, which is what the OSS Envy24 variant is for.

4.2.4 ALSA

ALSA is the most common sound driver in Linux. It supports the most

sound cards now. For many years, the ALSA API was constantly changing

but it’s pretty stable today.

4.2.5 ESOUND

ESOUND was a sound server that sat on top of OSS. It was written for a

window manager called Enlightenment. It supported a limited number of

bits and had high latency compared to modern times but multiplexed

multiple audio sources. It’s unknown whether it still works.

4.2.6 RAW 1394

The first interface between Linux software and FireWire camcorders.

This was the least reliable way to play audio to a camcorder. It

consisted of a library on top of the kernel commands.

4.2.7 DV 1394

The second rewrite of DV camcorder support in Linux. This was the most

reliable way to play audio to a camcorder. This consisted of direct

kernel commands.

4.2.8 IEC 61883

The third rewrite of DV camcorder support in Linux. This is a library

on top of RAW 1394 which is a library on top of the kernel commands.

It’s less reliable than DV 1394 but more reliable than RAW 1394. The

next rewrite ought to fix that.

4.3 VIDEO DRIVERS

The video drivers are used for both recording and playback to get data

to and from the hardware. Since the same drivers are used for both

recording and playback, their functionality is described here in a

separate section.

4.3.1 COMMON VIDEO DRIVER ATTRIBUTES

- DISPLAY

This is intended for dual monitor

displays. Depending on the value of Display, the Compositor window

will appear on a different monitor from the rest of the windows.

- DEVICE PATH

Usually a file in the /dev/ directory

which controls the device.

- SWAP FIELDS

Make the even lines odd and the odd lines even

when sending to the device. On an NTSC or 1080i monitor the fields may

need to be swapped to prevent jittery motion.

- OUTPUT CHANNEL

Devices with multiple outputs may need a

specific connector to send video on.

- PORT

The IEEE1394 standard specifies something known as the

port. This is probably the firewire card number in the system

to use.

- CHANNEL

The IEEE1394 standard specifies something known as the

channel. For DV cameras it’s always 63.
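
The SWAP FIELDS attribute above can be pictured with a small sketch. It takes the simplest reading of "make the even lines odd and the odd lines even", exchanging each pair of rows. The real driver may do it differently, for example by shifting the picture one line, so treat this as an illustration only.

```python
def swap_fields(frame):
    """Return a copy of frame (a list of rows) with each even/odd row pair exchanged."""
    out = list(frame)
    # Step through the rows two at a time; a trailing unpaired row stays put.
    for y in range(0, len(out) - 1, 2):
        out[y], out[y + 1] = out[y + 1], out[y]
    return out


frame = ["even 0", "odd 0", "even 1", "odd 1"]
print(swap_fields(frame))
```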

4.3.2 X11

This was the first method of video playback on any UNIX system, valid

all the way until 1999. It just writes the RGB triplet for each pixel

directly to the window. It’s the slowest playback method. It’s still

useful as a fallback for incompatible graphics hardware.

4.3.3 X11-XV

This was the second big method of video playback in UNIX starting in

1999. It converts YUV to RGB in hardware with scaling. It was the

preferred playback method for many years but can’t handle large frame

sizes. The maximum video size for XV is hardware dependent & usually

1920x1080.

4.3.4 X11-OPENGL

The most powerful video playback method is OpenGL but support is

completely hardware dependent. Although the OpenGL API was originally

supposed to be hardware independent, it only ended up getting supported

by NVidia with other brands moving to OpenCL or Vulkan.

With this driver, most effects are done in the GPU. OpenGL allows video

sizes up to the maximum texture size, which is usually larger than

4096x4096, depending on the graphics driver.

Today, the rendering as well as the playback can be done in OpenGL. It

relies on PBuffers and shaders to do video rendering. The graphics

driver must support OpenGL 2 and Cinelerra needs to be explicitly

compiled with OpenGL 2 support. This requires compiling it on a system

with the OpenGL 2 headers.

PBuffers are known to be fickle. If the graphics card doesn’t have

enough memory or doesn’t have the right visuals, PBuffers won’t work.

Try seeking several frames or restarting Cinelerra if OpenGL doesn’t

work.

X11-OpenGL processes everything in floating point. Although the

difference between YUV and RGB is retained, there is no speed difference

when using alpha or floating point color models.

The scaling equation in Preferences is ignored by OpenGL. OpenGL

always uses linear scaling.

To get the most acceleration, OpenGL-enabled effects must be placed

after software-only effects. All rendering before the last

software-only effect is done in software. The core operations like

camera and projector are of course OpenGL.
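
The ordering rule can be sketched as a split of the effect chain at the last software-only effect. This only illustrates the rule as stated. The effect names and their OpenGL flags below are made up for the example and say nothing about which real effects support OpenGL.

```python
def split_pipeline(effects):
    """Split an effect chain into (software_stage, opengl_stage).

    effects is a list of (name, supports_opengl) pairs in processing order.
    Everything up to and including the last software-only effect runs in
    software, even effects that could have used OpenGL.
    """
    last_software = -1
    for i, (_, supports_opengl) in enumerate(effects):
        if not supports_opengl:
            last_software = i
    software = [name for name, _ in effects[:last_software + 1]]
    opengl = [name for name, _ in effects[last_software + 1:]]
    return software, opengl


# Hypothetical chain: the middle effect has no OpenGL version.
chain = [("effect A", True), ("effect B", False), ("effect C", True)]
print(split_pipeline(chain))
```

Moving the software-only effect to the front of the chain would let both of the other effects run in the GPU.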

4.3.5 BUZ

This is a method for playing motion JPEG-A files directly to a

composite analog signal. It uses a popular hack of the Video4Linux 1

driver from 2000 to decompress JPEG in hardware. Sadly, even though

analog output is largely obsolete, newer drivers have replaced BUZ.

4.3.6 RAW 1394 VIDEO PLAYBACK

The first interface between Linux software and FireWire camcorders.

This was the least reliable way to play video to a camcorder. It

consisted of a library on top of the kernel commands.

4.3.7 DV 1394 VIDEO PLAYBACK

The second rewrite of DV camcorder support in Linux. This was the most

reliable way to play video to a camcorder. This consisted of direct

kernel commands.

4.3.8 IEC 61883 VIDEO PLAYBACK

The third rewrite of DV camcorder support in Linux. This is a library

on top of RAW 1394 which is a library on top of the kernel commands.

It’s less reliable than DV 1394 but more reliable than RAW 1394. This

was the last attempt at any kind of dedicated video hardware before

everything went to internet distribution.

4.4 PLAYBACK

4.4.1 AUDIO OUT

These determine what happens when you play sound from the timeline.

- SAMPLES TO SEND TO CONSOLE:

For playing audio, small fragments of sound are read from disk and

processed in a virtual console sequentially. A larger value here

causes more latency when you change mixing parameters but gives more

reliable playback.

Some sound drivers don’t allow changing of the console fragment so

latency is unchanged no matter what this value is.

A good way of ensuring high quality playback was to read bigger

fragments from the disk and break them into smaller fragments for the

soundcard. That changed when the virtual console moved from the push

model to the pull model. Since different stages of the rendering

pipeline can change the rate of the incoming data, it would now be really

hard to disconnect the size of the console fragments from the size of the

fragments read from disk.
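
The tradeoff between fragment size and latency is simple arithmetic. The sketch below computes how much audio one console fragment holds. It is a lower bound only, since the sound driver adds its own buffering, and the fragment sizes shown are arbitrary examples.

```python
def fragment_latency(fragment_samples, sample_rate):
    """Seconds of audio held in one console fragment."""
    if sample_rate <= 0:
        raise ValueError("sample rate must be positive")
    return fragment_samples / sample_rate


for size in (1024, 4096, 16384):
    ms = fragment_latency(size, 48000) * 1000
    print(size, "samples at 48000 Hz is about", round(ms, 1), "ms")
```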

- AUDIO OFFSET:

The ability to tell the exact playback position on Linux sound drivers

is pretty bad if it’s provided at all. Since this information is

required for proper video synchronization, it has to be accurate. The

AUDIO OFFSET allows users to adjust the position returned by the

sound driver to reflect reality. The audio offset doesn’t affect the

audio playback or rendering at all. It merely changes the

synchronization of video playback.

The easiest way to set the audio offset is to create a timeline with 1

video track and one audio track. Expand the audio track and center the

audio pan. The frame rate should be something over 24fps and the

sampling rate should be over 32000. The frame size should be small

enough for your computer to render it at the full framerate. Highlight

a region of the timeline starting at 10 seconds and ending at 20

seconds. Drop a gradient effect on the video track and configure

it to be clearly visible. Drop a synthesizer effect on the audio

and configure it to be clearly audible.

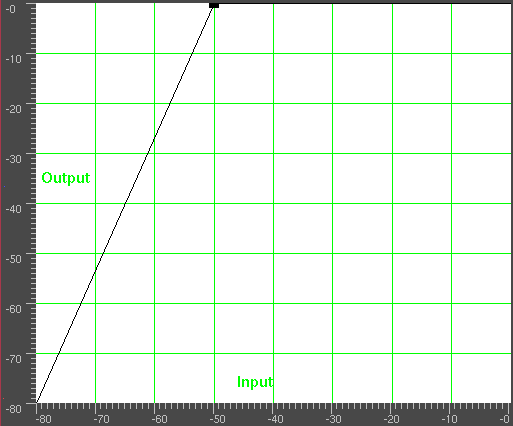

Play the timeline from 0 and watch to see if the gradient effect starts

exactly when the audio starts. If it doesn’t, expand the audio track

and adjust the nudge. If the audio starts ahead of the video, decrease

the nudge value. If the audio starts after the video, increase the

nudge value. Once the tracks play back synchronized, copy the nudge

value to the AUDIO OFFSET value in preferences.

Note: if you change sound drivers or you change the value of USE

SOFTWARE FOR POSITIONING INFORMATION, you’ll need to change the audio

offset because different sound drivers are unequally inaccurate.

- VIEW FOLLOWS PLAYBACK

Causes the timeline window to scroll when the playback cursor moves.

This can bog down the X Server or cause the timeline window to lock up

for long periods of time while drawing the assets.

- USE SOFTWARE FOR POSITIONING INFORMATION

Most soundcards and sound drivers don’t give reliable information on

the number of samples the card has played. When playing video you need

this information for synchronization. This option causes the sound

driver to be ignored and a software timer to be used for

synchronization.
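
A software timer of this kind can be sketched as elapsed time multiplied by the sample rate. How Cinelerra actually implements it is not described here, so the class below is only a minimal illustration with made-up names.

```python
import time


class SoftwarePosition:
    """Estimate the number of samples played from elapsed time."""

    def __init__(self, sample_rate, clock=time.monotonic):
        self.sample_rate = sample_rate
        self._clock = clock  # injectable so the estimate can be tested
        self._start = None

    def start(self):
        """Mark the moment playback begins."""
        self._start = self._clock()

    def samples_played(self):
        """Samples that should have been played since start()."""
        if self._start is None:
            return 0
        elapsed = self._clock() - self._start
        return int(elapsed * self.sample_rate)


position = SoftwarePosition(48000)
position.start()
time.sleep(0.01)
print("estimated samples played:", position.samples_played())
```

The estimate ignores whatever the soundcard reports, which is the point of the option. It also means any buffering delay in the driver has to be corrected with the AUDIO OFFSET.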

- AUDIO PLAYBACK IN REALTIME:

Back in the days when 150 MHz was the maximum, this allowed

uninterrupted playback on heavy loads. It forces the audio playback to

the highest priority in the kernel. Today it’s most useful for

achieving very low latency between console tweaks and soundcard

output. You must be root to get realtime priority.

- AUDIO DRIVER

There are many sound drivers for Linux. This allows selecting one

sound driver and setting parameters specific to it. The sound drivers

and their parameters are described in the sound driver section.

See AUDIO DRIVERS.

4.4.2 VIDEO OUT

These determine how video gets from the timeline to the screen.

The next 2 options are optimizations for straight editing without

compositing. These break most compositing.

- DISABLE MUTED VIDEO TRACKS

Muted tracks are not rendered, regardless of dependencies.

- PLAY ONLY 1 VIDEO TRACK

Only the 1st track with data is rendered, regardless of dependencies.

Because of nested EDLs & random access plugins, projects have to

normally render all the playable tracks to catch all the compositing

scenarios. It can be extremely slow if you’re not doing compositing.

If both options are selected, the project can select between 2 tracks by

toggling just the top mute curve. This is useful for non destructive

multicam editing.

If only DISABLE MUTED VIDEO TRACKS is selected, projects can elect

to disable tracks by just using their mute curves while keeping the

option always selected.

If only PLAY ONLY 1 VIDEO TRACK is selected, users can select the

playable track by stacking edits instead of manipulating mute curves.

This only plays the top track with an edit or plugin at the position.

Full compositing is used rarely enough that these options will probably

be on most of the time. The trick with using mute keyframes instead of

play keyframes is the play patch can be used to solo the track without

changing the project.
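
The way the 2 options combine can be sketched as a filter over the track list. The sketch assumes muted tracks are dropped first and the top remaining track with data is then chosen, which is our reading of the description above and not taken from the source code. The dictionary keys are made up for the example.

```python
def tracks_to_render(tracks, disable_muted, play_only_one):
    """Return the names of the tracks rendered at the current position.

    tracks is a list of dicts, top track first, with the keys
    'name', 'muted' and 'has_data'.
    """
    candidates = list(tracks)
    if disable_muted:
        candidates = [t for t in candidates if not t["muted"]]
    if play_only_one:
        # Only the top remaining track with an edit or plugin is rendered.
        for t in candidates:
            if t["has_data"]:
                return [t["name"]]
        return []
    return [t["name"] for t in candidates]


# Multicam case: toggling the top track's mute switches cameras.
cams = [
    {"name": "cam A", "muted": True, "has_data": True},
    {"name": "cam B", "muted": False, "has_data": True},
]
print(tracks_to_render(cams, disable_muted=True, play_only_one=True))
```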

- CPU SCALING QUALITY

When video playback involves any kind of scaling or translation in the

CPU, this algorithm is used. This doesn’t affect any other operation

besides scaling. When using OpenGL, it always scales using linear

interpolation.

- LOW

This uses nearest neighbor interpolation when scaling in software.

Produces jagged edges and uneven motion but it can extract higher

resolution when downscaling video in a multiple of pixels. A common

trick is downscaling 4k to 2k using LOW QUALITY.

- HIGH

This applies higher quality interpolation than linear when scaling in

software but results in softer images when scaling a multiple of

pixels. The current algorithm is Lanczos interpolation.
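
Why LOW can look sharper at an exact multiple is easy to see on a single row of pixels. In the sketch below nearest neighbor just keeps every 2nd pixel, while a plain 2 pixel average stands in for a filtered scaler. The average is a simplification chosen for the example and is not the Lanczos algorithm.

```python
def downscale_nearest(row, factor):
    """Keep every factor-th pixel with no filtering."""
    return row[::factor]


def downscale_average(row, factor):
    """Average each group of factor pixels; a stand-in for a filtered scaler."""
    return [
        sum(row[i:i + factor]) / factor
        for i in range(0, len(row) - factor + 1, factor)
    ]


# A hard edge that does not fall on a 2 pixel boundary.
row = [0, 0, 0, 255, 255, 255, 255, 255]
print("nearest:", downscale_nearest(row, 2))
print("average:", downscale_average(row, 2))
```

The nearest neighbor result keeps the edge as a hard step. The averaged result puts an in-between value on the edge, which is the softening described for HIGH.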

- PRELOAD BUFFER FOR QUICKTIME

The Quicktime decoder can handle DVD sources better when this is around

10000000. This reduces the amount of seeking required. Unfortunately

when reading high bitrate sources from a hard drive, this tends to slow

it down. For normal use this should be 0.

This option was used heavily before 2005. Since optical disks went

away, it’s been an artifact.

- DVD SUBTITLE TO DISPLAY

The recommended way to view subtitles is to extract them with programs

like mkvextract, subtomov, & the titler. This option has largely become

an artifact. You’re not supposed to watch pirated movies in Cinelerra &

enhance their crummy quality with it anyway.

DVD IFO files usually contain subtitle tracks. These must be decoded

by the MPEG decoder. Select Enable subtitles to enable

subtitle decoding. There are usually multiple subtitle tracks starting

from 0. The subtitle track to be decoded for all MPEG streams goes in

the DVD subtitle to display text box. Go to the asset corresponding

to the MPEG file in the Resources window and right click. Click on

Info. The number of subtitle tracks is given at the bottom.

- INTERPOLATE CR2 IMAGES

Enables interpolation of CR2/CR3 images. Interpolation is required

since a raw image is a Bayer pattern. The interpolation uses

libraw/dcraw’s built-in interpolation and is very slow. If this

operation is disabled, the Interpolate Bayer effect can be used

instead for faster but lower quality previewing.

Disabling interpolation can enable higher resolution since the

alternatives don’t have to use a lowpass filter. Disabling

interpolation & scaling down 50% is 1 alternative but it still uses a

lowpass filter. Disabling interpolation & applying Downsample

probably gives the best results. That doesn’t apply any lowpass

filtering. These options require doubling the red & blue levels in

color balance since the green pixels are going to outnumber R & B.

The Interpolate Bayer effect tries to extract more resolution than

downsampling, but has checkerboard artifacts.
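
The need to double red & blue falls out of counting the pixels. The sketch below counts the photosites in a mosaic and derives the gain that brings each color level with green. It assumes an RGGB layout, which is common but depends on the camera, and the function names are made up for the example.

```python
def bayer_channel_counts(width, height):
    """Count the R, G and B photosites in an RGGB mosaic (assumed layout)."""
    pattern = (("R", "G"), ("G", "B"))
    counts = {"R": 0, "G": 0, "B": 0}
    for y in range(height):
        for x in range(width):
            counts[pattern[y % 2][x % 2]] += 1
    return counts


def balance_gains(counts):
    """Gain per color that matches its summed level to green."""
    return {color: counts["G"] / n for color, n in counts.items()}


counts = bayer_channel_counts(4, 4)
print(counts)
print(balance_gains(counts))
```

Green has twice as many photosites as red or blue, so any step that sums or averages the uninterpolated mosaic ends up with twice as much green.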

- WHITE BALANCE CR2 IMAGES

Enables white balancing for CR2/CR3 images. This has gone from being

mandatory to optional when interpolating as libraries have evolved. It

originally required diabolical hacks to dcraw but seems to work properly

in the current libraw version.

Disabling white balancing is useful for operations involving dark frame

subtraction. The dark frame and the live exposure need to have the same

color matrix.

Beware that when white balancing is on & interpolation is off, the green

pixels still outnumber the R & B so they need a color balance step.

- VIDEO DRIVER

Normally video on the timeline goes to the compositor window during

continuous playback and when the insertion point is repositioned.

Instead of sending video to the Compositor window the video driver can

be set to send video to another output device during continuous

playback. This doesn’t affect where video goes when the insertion

point is repositioned, however.

The video drivers and their parameters are described in the video

driver section. See VIDEO DRIVERS.

4.5 RECORDING

Recording depends on an encoding format for the output file & an input

format from the hardware. For audio, all the input formats can write to

all the file formats. For video, only specific combinations are

supported. It is best to work from the top down, since setting certain

video drivers causes it to force certain file formats.

The parameters here affect what happens in File->Record.... The

intention was to make File->Record... go as fast as possible into

the record monitoring window, without a lengthy dialog to configure the

file format. Instead, the file format for recording is set here and it

is applied to all recordings. The input & file formats are set in

the same place since they depend on each other.

The filename is set in the record window since it can be different for

every recording. It must be set before starting recording & it doesn’t

check for an overwrite.

4.5.1 VIDEO IN

These determine how frames are ingested in File->record & in the

live video effect.

- RECORD DRIVER

There was once a plan to share the video recording

driver with the audio recording driver if the audio and video were

multiplexed in the same stream like DVB, but no use case for this

happened. It takes variable parameters depending on the driver. The

parameters have the same meaning as they do for playback.

Most webcams & USB dongles today use Video4Linux2. For capturing the

screen, you’ll use Screencap.

- VIDEO4LINUX2 DEVICE PATH

For video4linux2, this is usually /dev/video0 or 1 of the /dev/video

files.

- VIDEO4LINUX2 CODEC

This is the input format read from video4linux2. It has to be matched

to the file format & the hardware. To record video, the file format

must obviously support video 1st. Then, the compression setting for the

file has to be what the hardware codec supports.

Webcams, HDMI to USB, analog to USB generally support YUYV & MJPG 1

field. To record YUYV, the file format has to be command line & the

compression preset needs to have YUV 422. YUYV produces higher

quality but lower framerates when resolution is above 720x480.

To record MJPG 1 field, the file format has to be Quicktime & the

compression has to be JPEG.

Keychain cams generally only support MJPG 1 field.

For video4linux2, the frames to buffer in device normally needs to be 4

to get the full framerate. If it’s 2, it’ll only get 1/2 the framerate.

- SCREENCAPTURE

Screencap only takes a Display as a parameter. This can be left blank

to record the local display.

For best results with screencap, the file format should be command

line & the compression preset should have YUV 444.

- FRAMES TO RECORD TO DISK AT A TIME

Frames are recorded in a pipeline. First frames are buffered in the

device. Then they’re read into a larger buffer for writing to disk.

The disk writing is done in a different thread from the device reading.

For certain codecs the disk writing uses multiple processors. This

value determines how many frames are written to disk at a time.

- FRAMES TO BUFFER IN DEVICE

The number of frames to store in the device before reading. This

determines how much latency there can be in the system before frames are

dropped. It normally has to be 4.

- USE SOFTWARE FOR POSITIONING INFORMATION

Video uses audio for synchronization but most soundcards don’t give accurate position

information. This calculates an estimation of audio position in

software instead of the hardware for synchronization.

- SYNC DRIVES AUTOMATICALLY

For high bitrate recording the drives may be fast enough to store the

data but Linux may wait several minutes and stall as it writes several

minutes of data at a time. This forces Linux to flush its buffers

every second instead of every few minutes and produce slightly better

realtime behavior.
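
The idea of flushing often instead of letting the kernel batch up minutes of data can be sketched with fsync. Which system call Cinelerra uses is not stated here, and the sketch syncs every few chunks instead of every second to keep it deterministic, so both choices are assumptions.

```python
import os
import tempfile


def write_with_periodic_sync(path, chunks, sync_every):
    """Write chunks to path, forcing them to disk every sync_every chunks.

    Returns the number of explicit syncs performed.
    """
    syncs = 0
    with open(path, "wb") as f:
        for i, chunk in enumerate(chunks, start=1):
            f.write(chunk)
            if i % sync_every == 0:
                f.flush()             # empty the userspace buffer
                os.fsync(f.fileno())  # ask the kernel to write to the drive
                syncs += 1
    return syncs


with tempfile.TemporaryDirectory() as directory:
    target = os.path.join(directory, "capture.raw")
    count = write_with_periodic_sync(target, [b"x" * 1024] * 10, 3)
    print("explicit syncs:", count)
```

Each sync costs some throughput, which is why the improvement in realtime behavior is only slight.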

- SIZE OF CAPTURED FRAME

This is the size of the frames recorded. It is independent of the

project frame size because most video devices only record a fixed frame

size. If the frame size given here isn’t supported by the device it

might crash Cinelerra.

The aspect ratio for the recording monitor is currently assuming only

square pixels.

In the past, it has been determined by the setting for auto aspect

in Settings->set format. If auto aspect was enabled, the recording

monitor showed square pixels. If auto aspect was disabled, the

recording monitor stretched the captured frame to the aspect ratio in

Settings->set format. The problem with this was it was easier to

detect recording anomalies with square pixels while watching video from

the timeline was easier with stretched pixels.

- FRAME RATE FOR RECORDING

The frame rate recorded is independent of the project settings. This

sets the recorded frame rate.

4.5.2 AUDIO IN

These determine what happens when you record audio.

- RECORD DRIVER

This is used for recording audio in the Record window. It may be

shared with the Record Driver for video if the audio and video are

wrapped in the same stream. It takes variable parameters depending on

the driver. The parameters have the same meaning as they do for

playback.

- DEVICE PATH

Usually a file in the /dev/ directory which controls the

device.

- BITS

The number of bits of precision Cinelerra should set the device for.

This sometimes has a figurative meaning. Some sound drivers need to be

set to 32 bits to perform 24 bit recording and won’t record anything

when set to 24 bits. Some sound drivers need to be set to 24 bits for

24 bit recording.

- SAMPLES TO WRITE AT A TIME

Audio is first read in small fragments from the device. Many small

fragments are combined into a large fragment before writing to disk.

The disk writing process is done in a different thread. The value here

determines how large the combination of fragments is for each disk

write.
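
Combining small fragments into larger writes can be sketched as a simple accumulator. The separate disk writing thread is left out to keep the example short, and the names are made up for the illustration.

```python
def batch_fragments(fragments, samples_per_write):
    """Combine small fragments into batches of samples_per_write samples.

    fragments is an iterable of lists of samples. Full batches are yielded
    as soon as enough samples arrive; a final partial batch is yielded at
    the end so nothing is lost.
    """
    pending = []
    for fragment in fragments:
        pending.extend(fragment)
        while len(pending) >= samples_per_write:
            yield pending[:samples_per_write]
            pending = pending[samples_per_write:]
    if pending:
        yield pending


device_reads = [[1, 2], [3, 4], [5]]
for batch in batch_fragments(device_reads, 4):
    print("write", len(batch), "samples:", batch)
```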

- SAMPLE RATE FOR RECORDING

Regardless of what the project settings are, this is the sample rate

used for recording. This should be the highest the audio device

supports.

4.5.3 FILE FORMAT

This determines the output file format for recordings. It depends

heavily on the type of driver used. The interface is the same as the

rendering interface. The Record audio tracks toggle must be

enabled to record audio. The Record video tracks toggle must be

enabled to record video. The wrench button left of each toggle opens a

configuration dialog to set the codec corresponding to audio and

video. The audio and video is wrapped in a wrapper defined by the

File Format menu. Different wrappers may record audio only, video

only, or both.

Some video drivers can only record to a certain wrapper. DV, for

example, can only record to Quicktime with DV as the video compression.

If the video driver is changed, the file format may be updated to give

the supported output. If you change the file format to an unsupported

format, it may not work with the video driver.

4.6 PERFORMANCE

You’ll spend most of your time configuring this section. The main

focus of performance is rendering parameters not available in the

rendering dialog.

- CACHE ITEMS

To speed up rendering, several assets are kept open simultaneously.

This determines how many are kept open. A number too large may exhaust

your memory pretty fast and result in a crash. A number too small may

result in slow playback as assets need to be reopened more frequently.

-

SECONDS TO PREROLL RENDERS

Some effects need a certain amount of time to settle in. This sets a

number of seconds to render without writing to disk before the selected

region is rendered. When using the renderfarm you’ll sometimes need to

preroll to get seamless transitions between the jobs. Every job in a

renderfarm is prerolled by this value. This does not affect background

rendering, however. Background rendering uses a different preroll

value.

-

FORCE SINGLE PROCESSOR USE

Cinelerra tries to use all processors on the system by default but

sometimes you’ll only want to use one processor, like in a renderfarm

client. This forces only one processor to be used. The operating

system, however, usually uses the second processor anyway for disk

access so this option is really a 1.25 processor mode. The value of

this parameter is used in renderfarm clients.

-

USE OPENGL FOR RENDERING

OpenGL was traditionally used only for playback when it was limited to

16 bit float & very low resolution trig tables. Today, it can be used

for rendering by enabling this option. When combined with the

Command Line file format (See COMMAND LINE ENCODER), most of the rendering can be accelerated

in the GPU.

4.6.1 BACKGROUND RENDERING

Background rendering was originally conceived to allow HDTV effects to

be displayed in realtime. Background rendering causes temporary output

to constantly be rendered while the timeline is being modified. The

temporary output is played during playback whenever possible. It’s very

useful for transitions and previewing effects which are too slow to

display in a reasonable amount of time. If renderfarm is enabled, the

renderfarm is used for background rendering, giving you the potential

for realtime effects if enough network bandwidth and CPU nodes exist.

The typical way to enable background rendering is to 1st highlight a

region of the timeline to play back, then select Settings->Set

background rendering, then enable background rendering in the

preferences. When finished previewing the section, disable background

rendering in the preferences. If it’s always enabled, playback will

have stuttering & flashes as incomplete sections of the rendering are

played with complete sections of rendering.

- FRAMES PER BACKGROUND RENDERING JOB

This only works if renderfarm is being used, otherwise background

rendering creates a single job for the entire timeline. The number of

frames specified here is scaled to the relative CPU speed of rendering

nodes and used in a single renderfarm job. The optimum number is 10 -

30 since network bandwidth is used to initialize each job.

- FRAMES TO PREROLL BACKGROUND

This is the number of frames to render ahead of each background

rendering job. Background rendering is degraded when preroll is used

since the jobs are small. When using background rendering, this number

is ideally 0. Some effects may require 3 frames of preroll.

- OUTPUT FOR BACKGROUND RENDERING

Background rendering generates a sequence of image files in a certain

directory. This parameter determines the filename prefix of the image

files. It should be on a fast disk, accessible to every node in the

renderfarm by the same path. Since hundreds of thousands of image

files are usually created, ls commands won’t work in the

background rendering directory. The browse button for

this option normally won’t work either, but the configuration button

for this option works.

- FILE FORMAT

The file format for background rendering has to be a sequence of

images. The format of the image sequence determines the quality and

speed of playback. JPEG is good most of the time.

4.6.2 RENDERFARM

To use the renderfarm, set these options. Ignore them for a standalone

system.

-

USE RENDER FARM FOR RENDERING

When selected, all the

file->render operations use the renderfarm.

-

NODES

Displays all the nodes on the renderfarm and which ones are active.

Nodes are added by entering the host name of the node, verifying the

value of port and hitting add node.

Computer freaks may be better off editing the

~/.bcast/.Cinelerra_rc file than this if they have hundreds of

nodes. Remember that .Cinelerra_rc is overwritten whenever a copy of

Cinelerra exits.

Select the ON column to activate and deactivate nodes once they

are created.

Nodes may be edited by highlighting a row and hitting apply changes.

-

HOSTNAME

Edit the hostname of an existing node or enter the hostname of a new

node here.

-

PORT

Edit the port of an existing node or enter the port of a new node here.

-

REPLACE NODE

When editing an existing node, hit this to commit the changes to

HOSTNAME and PORT. The changes won’t be committed if you

don’t hit this button.

-

ADD NODE

Create a new node with the HOSTNAME and PORT settings.

-

DELETE NODE

Deletes whatever node is highlighted in the NODES list.

-

SORT NODES

Sorts the NODES list based on the hostname.

-

RESET RATES

This sets the framerate for all the nodes to 0. Frame rates are used

to scale job sizes based on CPU speed of the node. Frame rates are

only calculated when renderfarm is enabled.

-

TOTAL JOBS TO CREATE

Determines the number of jobs to dispatch to the renderfarm. The more

jobs you create, the more finely balanced the renderfarm becomes.

Determine the total jobs to create by multiplying the number of nodes

including the master node by some number. Multiply them by 1 to have

one job dispatched for every node. Multiply them by 3 to have 3 jobs

dispatched for every node. If you have 10 slave nodes and one master

node, specify 33 to have a well balanced renderfarm.
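The arithmetic for TOTAL JOBS TO CREATE is small enough to write down. The helper below is just a sketch of the rule described above; the function name and its parameters are invented for the example.

```python
def total_jobs(slave_nodes, jobs_per_node, master_nodes=1):
    """Number of nodes, including the master, times the jobs wanted per node."""
    return (slave_nodes + master_nodes) * jobs_per_node


print(total_jobs(slave_nodes=10, jobs_per_node=1))   # 11
print(total_jobs(slave_nodes=10, jobs_per_node=3))   # 33
```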

4.7 INTERFACE

These parameters affect purely how the user interface works.

-

INDEX FILES GO HERE

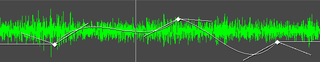

Back in the days when 4 MB/sec was unearthly speed for a hard drive,

index files were introduced to speed up drawing the audio tracks. This

option determines where index files are placed on the hard drive.

-

SIZE OF INDEX FILE

Determines the size of an index file. Larger index sizes allow smaller

files to be drawn faster while slowing down the drawing of large files.

Smaller index sizes allow large files to be drawn faster while slowing

down small files.

-

NUMBER OF INDEX FILES TO KEEP

To keep the index directory from becoming unruly, old index files are

deleted. This determines the maximum number of index files to keep in

the directory.

-

DELETE ALL INDEXES

When you change the index size or you want to clean out excessive index

files, this deletes all the index files.

- TIME STRETCH SCRUBBING

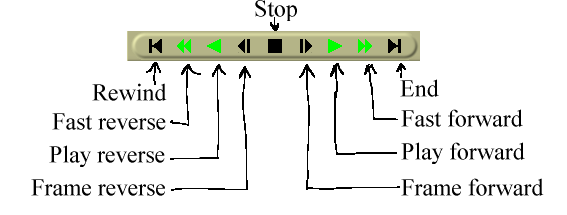

Scrubbing of audio with the transport controls (See USING THE TRANSPORT CONTROLS) can either use tape style pitch stretching or time

stretching with constant pitch. The time stretching mode is more

intelligible but pitch stretching is the traditional sound a tape deck

would have made. Time stretch scrubbing does not have all the options or

use a fast fourier transform like the Time Stretch effect (See TIME STRETCHING AUDIO).

- FRAMES PER FOOT

When the time format is feet-frames, this defines the number of frames

per foot. This mode was intended for correlating the timeline with

positions on physical film. Set the time format by right clicking in

the time bar in the program window (See PROGRAM.)

- MIN DB FOR METER

Some sound sources have a lower noise threshold than others.

Everything below the noise threshold is meaningless. This option sets

the meters to clip below a certain level. Consumer soundcards usually

bottom out at -65. Professional soundcards bottom out at -90.

See SOUND LEVEL METERS.

- MAX DB FOR METER

This sets the maximum sound level represented by the sound meters. No

matter what this value is, no soundcard can play sound over 0 db. This

value is presented merely to show how far over the limit a sound wave

is.

See SOUND LEVEL METERS.

- THEME

Cinelerra supports variable themes. Select one here and restart

Cinelerra to see it.

- OVERRIDE DPI

The theme tries to scale itself based on the DPI reported by the X

server. This doesn’t always work, so there is an option to override it

with a user defined DPI.
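To illustrate the FRAMES PER FOOT option above, a feet-frames position is just the frame number divided by the frames per foot, with the remainder kept. The sketch below assumes whole frame numbers and uses 16 frames per foot (the figure for 4 perf 35mm film) purely as an example value. How Cinelerra formats the result on screen is not shown here.

```python
def frames_to_feet_frames(frame, frames_per_foot):
    """Split a frame number into whole feet and leftover frames."""
    feet, frames = divmod(frame, frames_per_foot)
    return feet, frames


def feet_frames_to_frames(feet, frames, frames_per_foot):
    """Inverse conversion, back to a frame number."""
    return feet * frames_per_foot + frames


print(frames_to_feet_frames(100, 16))      # (6, 4)
print(feet_frames_to_frames(6, 4, 16))     # 100
```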

4.8 ABOUT

This section gives you information about the copyright, the time of the

current build, the lack of a warranty, and the versions of some of the

libraries. Be sure to agree to the terms of the lack of the warranty.

5 CREATING A NEW PROJECT

There are 2 ways to create a new project: going to File->New or

loading new files See LOADING FILES. Once a new project is

created, all the parameters can be changed later without creating a new

project.

5.1 USING FILE->NEW

One way is to go to File->New. This merely clears the EDL & creates

a blank timeline with the project settings previously defined in

settings->format. There was originally a dialog for new, but it

was never used. The number of tracks created depends on the last file

loaded. It’s proven easiest to create the needed tracks with the Audio

& Video menus.

5.2 CHANGING PARAMETERS AFTER LOADING

After a project is created, you can use the set format dialog to change

parameters without deleting the project. Go to Settings->Set

format. The sections of the dialog are described here:

-

Presets - Select an option from this menu to have all the project

settings set to one of the known standards.

- Audio -> Tracks - Sets the number of audio tracks the new project

should have. Tracks can be added or deleted later, but options are

provided here for convenience.

- Audio -> Channels - Sets the number of audio channels the new

project should have. The number of audio channels doesn’t have to be

the same as the number of tracks.

- Audio -> Samplerate - Sets the samplerate of the audio. The

project samplerate doesn’t have to be the same as the media sample rate

that you load. Media is resampled to match the project sample rate.

- Video -> Tracks - Sets the number of video tracks the new project

should have. Tracks can be added or deleted later, but options are

provided here for convenience.

- Video -> Framerate - Sets the framerate of the video. The project

framerate doesn’t have to be the same as the media frame rate that you

load. Media is reframed to match the project framerate.

- Video -> Canvas size - Sets the size of the video output. Each

track also has its own frame size. Initially the New dialog

creates video tracks whose sizes all match the video output, but the

video track sizes can be changed later without changing the video

output.

- Video -> Aspect ratio - Sets the aspect ratio. The aspect ratio is

applied to the video output. The aspect ratio can be different than

the number of horizontal pixels / the number of vertical pixels.

Setting a different aspect ratio than the number of pixels results in

nonsquare pixels.

- Video -> Auto aspect ratio - If this is checked, the New dialog

always recalculates the Aspect ratio setting when the Canvas

size is changed. This ensures pixels are always square.

- Video -> Color model - This sets the color model video intermediates

in the project will be stored in. Color models are described in a

separate section See COLOR MODEL.

More about this section is discussed in See SETTING PROJECT ATTRIBUTES.
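The relationship between canvas size, aspect ratio and pixel shape described above can be worked out numerically. This is a sketch with made-up function names, and it assumes the aspect ratio is given as two whole numbers; a result of 1 means square pixels.

```python
from fractions import Fraction


def pixel_aspect(canvas_w, canvas_h, aspect_w, aspect_h):
    """Width / height of one pixel for a given canvas size and aspect ratio."""
    return Fraction(aspect_w, aspect_h) / Fraction(canvas_w, canvas_h)


def auto_aspect(canvas_w, canvas_h):
    """The aspect ratio that keeps pixels square: simply width / height."""
    return Fraction(canvas_w, canvas_h)


print(pixel_aspect(1920, 1080, 16, 9))   # 1    (square pixels)
print(pixel_aspect(720, 480, 4, 3))      # 8/9  (nonsquare pixels)
print(auto_aspect(720, 480))             # 3/2
```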

6 THE MAIN WINDOWS

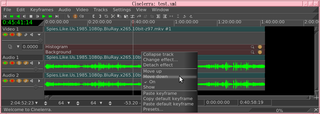

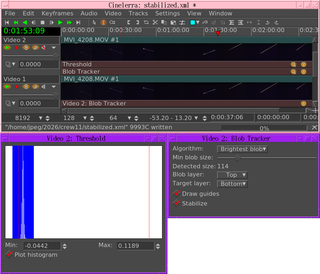

When Cinelerra first starts, you’ll get four main windows. Hitting

CTRL-w in any window closes it.

6.1 VIEWER

In here you’ll scrub around source media and clips, selecting regions

to paste into the project. Operations done in the viewer affect a

temporary EDL or a clip but not the timeline.

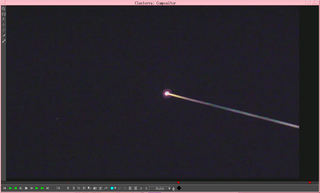

6.2 COMPOSITOR

This window displays the output of the timeline. It’s the interface

for most compositing operations or operations that affect the

appearance of the timeline output. Operations done in the Compositor

affect the timeline but don’t affect clips.

The video output has several navigation functions.

The video output size is either locked to the window size or unlocked

with scrollbars for navigation. The video output can be zoomed in and

out and panned. Navigating the video output this way doesn’t affect the

rendered output, it just changes the point of view in the compositor

window.

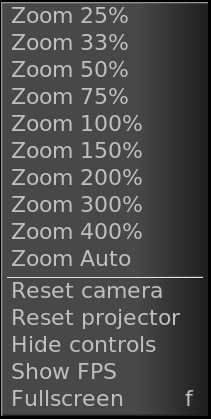

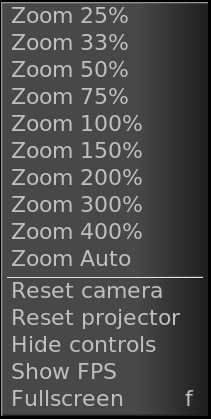

VIDEO CONTEXT MENU

Right clicking anywhere in the video output brings up a menu with all

the zoom levels and some other options. Selecting a percentage here

resizes the window. If the video size is locked to the window size, the

video changes size. If the video size is unlocked from the window size,

the video stays the same size.

Selecting auto locks the video to the window size.

The reset camera and reset projector options center the camera

and projector See COMPOSITING.

The Hide controls option hides everything except the video.

If video is unlocked from the window size, middle clicking and dragging

anywhere in the video pans the point of view.

Hitting the + and - keys unlocks the video size from the window size &

zooms in and out of the video output.

Underneath the video output are copies of many of the functions

available in the main window. In addition there is a zoom menu and a

tally light.

Selecting a percentage in the zoom menu unlocks the video size from the

window size. Selecting Auto locks the video size to the window

size.

The zoom menu does not affect the window size.

The tally light turns red when rendering is happening. This is useful

for knowing if the output is current.

On the left of the video output is a toolbar specific to the compositor

window. Select a tool to enable it. Select it again to disable it. To

protect the video from changes, be sure all the tools are deselected.

Here are the functions in the toolbar:

6.2.1 MAGNIFYING GLASS

This zooms in and out of the compositor output without resizing the

window. If the video output is currently locked to the size of the

window, clicking in it with the magnifying glass unlocks it and

creates scrollbars for navigation.

Left clicking in the video zooms in.

Ctrl clicking in the video zooms out.

Rotating the wheel on a wheel mouse zooms in and out.

6.2.2 MASKS TOOL

This brings up the mask editing tool See MASKS.

6.2.3 RULER

The ruler shows pixel coordinates for the cursor & a line. Click drag

in the video output to draw a line to measure. Click without dragging

to hide the ruler line.

Always draw it - Causes the ruler line to be drawn in all the other

tools. This allows the ruler to be used to align camera & projector

movements.

6.2.4 CAMERA

This brings up the camera editing tool See THE CAMERA AND PROJECTOR.

6.2.5 PROJECTOR

This brings up the projector editing tool See THE CAMERA AND PROJECTOR.

6.2.6 CROP TOOL

This brings up the cropping tool See CROPPING.

6.2.7 EYEDROPPER

This brings up the eyedropper. The eyedropper detects whatever color

is under it and stores it.

Click anywhere in the video output to select the color at that point.

radius - Determines the size of the area that the eyedropper scans.

The average of the area is used.

use maximum - Uses the maximum values from the scanned area instead of

the average.

The eyedropper value can be applied to many effects. Different effects

handle the eyedropper differently. They usually have an option called

use color picker to use the last scanned eyedropper color.
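The difference between the average and use maximum can be sketched as follows. This is an illustration only: the image is a list of rows of (r, g, b) tuples, the scanned area is assumed to be a square of the given radius around the click, and the function name is invented. The real eyedropper may scan a differently shaped area.

```python
def sample_color(image, x, y, radius, use_maximum=False):
    """Scan the area around (x, y) and return its average or maximum color."""
    height, width = len(image), len(image[0])
    pixels = [image[row][col]
              for row in range(max(0, y - radius), min(height, y + radius + 1))
              for col in range(max(0, x - radius), min(width, x + radius + 1))]
    channels = list(zip(*pixels))          # one tuple per color channel
    if use_maximum:
        return tuple(max(channel) for channel in channels)
    return tuple(sum(channel) / len(channel) for channel in channels)


# A black 3x3 image with one red-ish pixel in the middle.
image = [[(0, 0, 0)] * 3 for _ in range(3)]
image[1][1] = (90, 0, 0)

print(sample_color(image, 1, 1, radius=1))                     # (10.0, 0.0, 0.0)
print(sample_color(image, 1, 1, radius=1, use_maximum=True))   # (90, 0, 0)
print(sample_color(image, 1, 1, radius=0))                     # (90.0, 0.0, 0.0)
```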

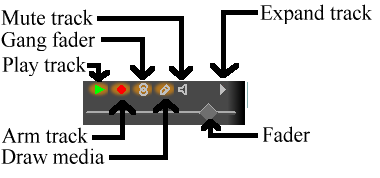

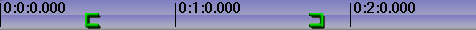

6.3 PROGRAM

This contains the timeline and the entry point for all menu driven

operations. The timeline consists of a vertical stack of tracks with

horizontal representation of time. This defines the output of rendering

operations and what is saved when you save files. Left of the tracks is

the patchbay which contains options affecting each track.

Above the tracks is the time bar. Right click in the time bar to select

a time format. The time format is used by all the clocks.

Under the Window menu you’ll find options affecting the main

windows. default positions repositions all the windows to a 4

screen editing configuration. On dual headed displays, the

default positions operation fills only one monitor with windows.

6.4 RESOURCES

Effects, transitions, clips, and assets are accessed here. Most of the

resources are inserted into the project by dragging them out of the

resource window. Management of resource allocation is also performed

here.

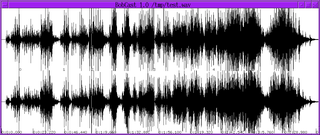

6.5 SOUND LEVEL METERS

An additional window, the levels window can be brought up from

the Window menu. The levels window displays the output

audio levels after all mixing is done.

Sound level meters appear in many locations. They can be toggled in

the viewer and compositor windows with the level toggle. They appear in

the patchbay when a track is expanded (See THE PATCHBAY.) They appear

in the recording monitor when audio is being recorded.

The sound levels in the levels window, compositor, and viewer

correspond to the final output levels before they are clipped to the

soundcard range. In the record monitor they are the input values

from the sound card. In the patchbay they are the sound levels for

each track after all effects are processed and before downmixing for

the output.

Most of the time, audio levels have numerical markings in DB but in the

patchbay there isn’t enough room.

The sound level is color coded as an extra means of determining the

sound level. Even without numerical markings, the sound level color

can distinguish between several ranges and overload. Look at the color

codings in a meter with numerical markings to see what colors

correspond to what sound level. Then for meters in the patchbay in

expanded audio tracks, use the color codings to see if it’s overloading.

Be aware that sound levels in Cinelerra can go above 0DB. This allows

not only seeing if a track is overloading but how much information is

being lost by the overloading. Overloading by less than 3DB is usually

acceptable. While overloading is treated as positive numbers in

Cinelerra, it is clipped to 0 when sent to a sound card or file.
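The behavior of levels above 0DB can be illustrated with a few lines of arithmetic. The sketch assumes samples are floating point with 1.0 as full scale (0DB), which is an assumption made for the example. The function names and the max_db default are also made up; the min_db default is the consumer soundcard figure from the INTERFACE section.

```python
import math


def to_db(sample):
    """Level of a linear sample in dB, assuming 1.0 is full scale (0 dB)."""
    magnitude = abs(sample)
    if magnitude == 0:
        return float("-inf")
    return 20 * math.log10(magnitude)


def meter_reading(sample, min_db=-65.0, max_db=6.0):
    """Clamp the level to the visible range of a meter."""
    return max(min_db, min(max_db, to_db(sample)))


def clip_for_output(sample):
    """Limit a sample to full scale, as happens on the way to a sound card or file."""
    return max(-1.0, min(1.0, sample))


for sample in (0.5, 1.0, 1.3):
    print(round(to_db(sample), 2), clip_for_output(sample))
```

A sample of 1.3 reads as a positive level on the meter but leaves the program as 1.0, which is the information lost by overloading.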

The visible range of the sound level meters is configurable in

settings->preferences->interface (See INTERFACE.)

6.6 OTHER WINDOWS

The Overlays window can be brought up from the Window

menu. This is a quick way to toggle what is drawn in the timeline.

Every option in the View menu is available here.

7 LOADING AND SAVING FILES

7.1 SUPPORTED MEDIA FORMATS

Cinelerra reads most of the file formats found on the internet, with

limited support for pro camera output. You may be able to load other

formats not described here. Most of the decoding is done through

ffmpeg, which entails writing a new API for every new codec. It tends

to go a few years between codec updates so a lot of new codecs can be

combined in a single API rewrite.

Most of the video rendering is now done through ffmpeg, using the

Command Line file format. A few formats like Quicktime/MP4 & audio

formats still come out better when they’re sent through their dedicated

libraries.

Whether it’s a media format or an edit decision list affects what

Cinelerra does with it. Edit decision lists replace the project

settings. Formats which contain media but no edit decisions just add

data to the tracks unless the Conform Project option is enabled in

the load dialog.

If your project sample rate is 48khz and you load a sound file with

96khz, you’ll still be playing it at 48khz. If you load an EDL file at

96khz and the current project sample rate is 48khz, you’ll change it to

96khz.

Some file formats are very slow to display on the timeline. These

usually have video which is highly compressed. Disable picon drawing

for these files with the draw media toggle to speed up operations.

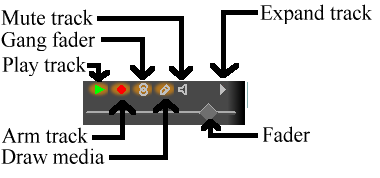

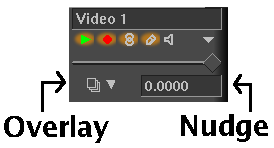

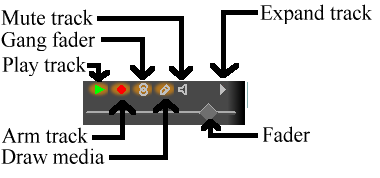

Track attributes

Supported file formats are currently:

- WAV

- FLAC

- PCM

- AIFF

- AC3 audio

7.1.1 COMMAND LINE ENCODER

This writes the output through a pipe to a command line program, usually

ffmpeg but it can also be /dev/null or netcat. Audio & video are

supported. Different command lines can be used for encoding audio &

video, then multiplexing them into a single file. There’s a GUI for

presets & storing different command lines.

Someone could conceivably read video from Live video & write it to

netcat to use the render operation as a live video processor.
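The idea of writing output through a pipe to a command line program can be sketched in a few lines. This is not how Cinelerra itself is implemented, and the function name is invented. To keep the example runnable anywhere, a tiny Python child process that just counts the bytes it receives stands in for ffmpeg. It prints only after reading all of its input, which is why reading its output at the end is safe here.

```python
import subprocess
import sys


def render_through_pipe(command, frames):
    """Feed raw frames to the standard input of an external encoder command."""
    encoder = subprocess.Popen(command,
                               stdin=subprocess.PIPE,
                               stdout=subprocess.PIPE)
    for frame in frames:
        encoder.stdin.write(frame)
    encoder.stdin.close()              # end of stream tells the encoder to finish
    output = encoder.stdout.read()
    return encoder.wait(), output


# Stand-in for the encoder: counts the bytes arriving on its standard input.
byte_counter = [sys.executable, "-c",
                "import sys; print(len(sys.stdin.buffer.read()))"]

# Three tiny 4x2 frames in YUV 4:2:0 planar: 4 * 2 * 3 / 2 = 12 bytes each.
frames = [bytes(12) for _ in range(3)]

status, output = render_through_pipe(byte_counter, frames)
print(status, output.strip())          # 0 b'36'
```

Swapping the stand-in for a real encoder command line is all the render operation needs to know about the encoder.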

The main use of command line encoding is GPU encoding through ffmpeg. A

GPU encoding ffmpeg is not officially included in the Cinelerra

distribution because of licensing restrictions, although there may or

may not be an unofficial copy.

It officially has to be compiled from scratch & it’s very specific to

the hardware. For nvidia, it needs nvenc support. There is a buried

document showing how to build ffmpeg with hardware support on

https://docs.nvidia.com/video-technologies/video-codec-sdk/ffmpeg-with-nvidia-gpu/

We can build an ffmpeg to show you what works. In the ffmpeg directory,

run

./configure --enable-pthreads --disable-ffplay --enable-nonfree --enable-gpl --enable-cuda-nvcc --enable-libnpp --nvccflags=-I/usr/local/cuda/include --extra-cflags=-I/usr/local/cuda/include --extra-ldflags=-L/usr/local/cuda/lib64

This also requires a working CUDA installation. Then run make.

The main encoding we’re interested in accelerating is video, so enable

RENDER VIDEO TRACKS. Then go to the wrench icon. It has a number of

presets. Highlight a preset & hit load to make it use the preset. If

you make changes to the preset, hit save to overwrite it, otherwise the

change will be applied to the current rendering job but not saved as a

preset.

If you want to create a new preset, change the title before

hitting save. If you screw up a preset that came with the program,

delete the preset & restart the program. It’ll always recreate the

default presets.

Ffmpeg HEVC VBR is a variable bitrate encoder for H.265. HEVC CBR is a

constant bitrate encoder for H.265. In the command lines are the ffmpeg

options for a fixed quantization factor or a fixed bitrate.

Finally, there is the ever important null encoder which just throws away

the output. This is useful for benchmarking rendering.

The command line takes some formatting codes for the frame rate, frame

size, & filename.

An important option is the color model. It most often should be YUV 420

planar.

The audio & video encoder have similar windows. The audio encoding

takes a sample format instead of a color model. A very common error

with ffmpeg is the dreaded "input contains (near) nan/+-inf". If you get

this error, it means the audio encoder expects the samples in 1 format

but Cinelerra is outputting another format. It usually happens when

trying to encode floating point. A safe setting is 24 bit linear,

signed, non dithered, lo hi byte order.

The wrapper runs after the audio & video encoding is finished. An

important option in the wrapper is deleting the temporaries. If the

wrapper doesn’t work, you may not want to reencode everything to try

again. In this case, you don’t want to delete the temporaries but want

to run the wrapper on the command line a 2nd time.

7.1.2 QUICKTIME

Quicktime was not originally the standard for UNIX but we used it

because it was well documented. It later became MP4. All of the

Quicktime movies on the internet are compressed. Cinelerra doesn’t

support most compressed Quicktime movies but does support some. If it

crashes when loading a Quicktime movie, that means the format probably

wasn’t supported.

NOTES ON QUICKTIME ENCODING

Here are some notes regarding making Quicktime movies in Cinelerra:

Quicktime is a wrapper for 2 codecs, a video codec and an audio codec.

The video and audio codecs are picked separately. The preferred

encoding for Quicktime output is MPEG-4 Video and MPEG-4 Audio. This

format plays in the commercial players for Windows and has good

compression quality. For better compression, use H-264 Video.

Unfortunately H-264 decoding is so slow it can’t play very large frame

sizes.

Cinelerra supports 2 nonstandard codecs: Dual MPEG-4 video and dual

H.264 video. These won’t play in anything but Cinelerra and XMovie.

They are designed for movies where the frames have been divided into 2

fields, each field displayed sequentially. The dual codecs interleave

2 video streams to improve efficiency without requiring major changes

to the player.

7.1.3 MPEG-4 AUDIO

This is the same as Quicktime with MPEG-4 Audio as the audio codec.

7.1.4 IMAGE SEQUENCES

Rendering an image sequence is not the same as rendering a single

image. When rendering an image sequence Cinelerra generates a table of

contents file for the image sequence and makes a different image file

for every timeline position. The table of contents can be loaded

instead of the individual images to get better performance. To learn

more about the different image formats supported in an image sequence,

read about still images.

7.1.5 STILL IMAGES

Rendering a single image causes the image file to be overwritten for

every timeline position. No table of contents is created. When

loaded, the image takes up one frame in length and doesn’t change the

project attributes.

Several still image formats not normally found in other programs are

described here.

7.1.5.1 OPEN EXR IMAGES

You may not know about Open EXR. This format stores floating point RGB

images. It also supports a small amount of compression. Projects

which render to EXR should be in a floating point color model to take

advantage of it See SETTING PROJECT ATTRIBUTES. Several compression

options are available for EXR.

-

PIZ Lossless wavelet compression. This is the best compression.

- ZIP Lossless gzip algorithm.

- RLE Lossless run length encoding. This is the fastest and worst

compression.

- PXR24 Lossy compression where the floating point numbers are

converted to 24 bits and compressed with gzip.

Select Use Alpha if the project colormodel has an alpha channel and

you want to retain it in the file. Otherwise the primary colors are

multiplied by the alpha channel.
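What happens to a pixel when Use Alpha is not selected can be shown with one line of arithmetic. The sketch assumes floating point components in the range 0 to 1, and the function name is made up.

```python
def premultiply(r, g, b, a):
    """Multiply the primary colors by the alpha channel and drop the alpha."""
    return (r * a, g * a, b * a)


print(premultiply(1.0, 0.5, 0.25, 0.5))   # (0.5, 0.25, 0.125)
print(premultiply(1.0, 0.5, 0.25, 1.0))   # (1.0, 0.5, 0.25)
```

A fully opaque pixel is unchanged, while a half transparent one comes out at half brightness.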

7.1.5.2 RAW DIGITAL CAMERA IMAGES

RAW digital camera images are a special kind of image file which

Cinelerra only imports. These must be processed in a floating point

color space once they are on the timeline. Raw images from Canon

cameras are the only ones tested. They need to have the Linearize

effect applied to correct gamma. Because raw images take a long time

to interpolate, they are usually viewed first in a proxy file and then

touched up.

First apply the Linearize effect to a track of raw images and set it to

automatic with 0.6 gamma. Then render the timeline to a

Quicktime JPEG file. Append the Quicktime JPEG file in a new track and

disable playback of the old track. Now the gamma corrected copy of

each raw image can be previewed relatively fast in the same timeline

position as the original image.

7.1.6 AVI

AVI with assorted audio and video codecs. Because AVI is so

fragmented, your luck will vary.

7.1.7 MPEG FILES CONTAINING VIDEO

MPEG files containing video can be loaded directly into Cinelerra. If

the file is supported, a table of contents is built. If the file is

unsupported, it usually crashes or shows very short tracks.

Unfortunately, this method of loading MPEG files isn’t good enough if

you intend to use the files in a renderfarm.

To use MPEG files in a renderfarm you need to run mpeg3toc to

generate a table of contents for the file, then load the table of

contents. Mpeg3toc needs the absolute path of the MPEG file. If you

don’t use an absolute path, it assumes the MPEG file is in the same

directory that Cinelerra is run from.
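When scripting the table of contents step, the absolute path requirement is easy to forget. The helper below is a hypothetical sketch that only builds the command. It assumes the argument order is the input file followed by the table of contents, as in the DVD example in this manual, and it doesn't check that mpeg3toc is installed.

```python
import os


def mpeg3toc_command(mpeg_path, toc_path):
    """Build an mpeg3toc command line with the MPEG file as an absolute path."""
    return ["mpeg3toc", os.path.abspath(mpeg_path), toc_path]


command = mpeg3toc_command("movie.mpg", "movie.toc")
print(command)
# To actually run it, with mpeg3toc in the PATH:
#   import subprocess
#   subprocess.run(command, check=True)
```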

MPEG streams are structured into multiple tracks. Each track can be

video or audio. Each audio track can have 1-6 channels. Cinelerra

converts each channel of audio into a track.

NOTES ON MPEG VIDEO ENCODING

MPEG video encoding is done separately from MPEG audio encoding. In

MPEG video there are 2 colormodels. The YUV 4:2:0 colormodel is

encoded by a highly optimized version of mpeg2enc with presets for

standard consumer electronics. In the process of optimizing mpeg2enc,

they got rid of YUV 4:2:2 encoding. The YUV 4:2:2 colormodel is

encoded by a less optimized version of mpeg2enc.

YUV 4:2:2 encoding was kept around because the NTSC version of DV video

loses too much quality when transferred to YUV 4:2:0. This DV video

must be transferred to YUV 4:2:2.

When encoding YUV 4:2:0, the bitrate parameter changes meaning

depending on whether the bitrate or quantization is fixed. If the

bitrate is fixed, it’s the target bitrate. If the quantization is

fixed, it’s the maximum bitrate allowed. This is a quirk of the

mpeg2enc version.

7.1.8 DVD MOVIES

DVDs are split into a number of programs, each identified by a unique

IFO file. If you want to load a DVD, find the corresponding

IFO file for the program of interest. Load the IFO file directly

and a table of contents will be built. Alternatively for renderfarm

usage, a table of contents can be created separately.

Run

mpeg3toc -v /cdrom/video_ts/vts_01_0.ifo dvd.toc

or something similar. Then load dvd.toc.

7.1.9 MPEG 1 AUDIO

These are .mp2 and .mp3 files. If fixed bitrate, they can be loaded

directly with no table of contents. Variable bitrate streams need to

have a table of contents created with mpeg3toc.

7.1.10 OGG THEORA/VORBIS

The OGG format is an antiquated but supposedly unpatented way of

compressing audio and video. The quality isn’t as good as H.264 or

MPEG-4 Audio. In reality, anyone with enough money and desire can find

a patent violation so the justification for OGG is questionable.

7.1.11 EDIT DECISION LIST

Edit decision lists are generated by Cinelerra for storing projects.

They end in .xml. They change project attributes when loaded.

Because edit decision lists consist of text, they can be edited in a

text editor.

7.2 LOADING FILES

All data that you work with in Cinelerra is acquired either by

recording from a device or by loading from disk. This

section describes loading.

The loading and playing of files is just as you would expect. Just go

to file->Load, select a file for loading, and hit ok. Hit

the forward play button and it should start playing, regardless of

whether a progress bar has popped up.

Another way to load files is to pass the filenames as arguments on the

command line. This creates new tracks for every file and starts the

program with all the arguments loaded.

If the file is a still image, the project’s attributes are not changed

and the first frame of the track becomes the image. If the file has

audio, Cinelerra may build an index file for it to speed up drawing.

You can edit and play the file while the index file is being built.

7.2.1 LOAD MODE

Usually three things happen when you load a file. First the existing

project is cleared from the screen, second the project’s attributes are

changed to match the file’s, and finally the new file’s tracks are

created in the timeline.

But Cinelerra lets you change what happens when you load a file.

In the file selection box there is a range of options for load

mode. Each of these options loads the file a different way. The load

mode is a recurring theme in many of Cinelerra’s functions. A lot of

steps that would normally entail editing operations on the timeline can

be automated by setting the right load mode.

If you load files by passing command line arguments to Cinelerra, the

files are always loaded with the Replace current project mode.

- Replace current project

All tracks in the current project are deleted and new tracks are

created to match the source. Project attributes are only changed when

loading XML. If multiple files are selected it adds new tracks for

every file.

- Replace current project and concatenate tracks

Same as replace current project except if multiple files are selected

it concatenates the tracks of every file after the first.

- Append in new tracks

The current project is not deleted and new tracks are created for the

source.

- Concatenate to existing tracks

The current project is not deleted and new files are concatenated to

the existing tracks.

- Paste at insertion point

The file is pasted in like a normal paste operation.

- Create new resources only

The timeline is unchanged and new resources are created in the Resource

Window.

- Nested EDL

If the file is an EDL, the output of the EDL is pasted in like a media

file. Nested EDLs have 1 video track & a number of audio tracks

corresponding to the number of output channels. They allow larger

sequences composed of many smaller sequences, transitions to be applied

to the output of another EDL, & global processing on the output of an

EDL without having to manipulate each track.
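
The difference between the track-oriented load modes is easier to see in a toy model. The following Python sketch is not Cinelerra code. It assumes every file contributes exactly one track, represents a project as a list of tracks, and uses made-up mode names. Paste at insertion point and Nested EDL are left out because they depend on the insertion point and on EDL contents.

```python
def load_files(project, files, mode):
    """Return the track layout after loading files with a load mode.

    project: list of tracks, each track a list of file names in order.
    Toy assumption: every file contributes exactly one track.
    """
    if mode == "replace":
        # Old tracks are deleted, one new track per file.
        return [[f] for f in files]
    if mode == "replace_concatenate":
        # Old tracks are deleted, later files follow the first one.
        return [list(files)] if files else []
    if mode == "append_new_tracks":
        # Old tracks are kept, one new track per file.
        return [list(t) for t in project] + [[f] for f in files]
    if mode == "concatenate_existing":
        # Old tracks are kept, new files go on the end of the first track.
        tracks = [list(t) for t in project]
        if not tracks:
            tracks.append([])
        tracks[0].extend(files)
        return tracks
    if mode == "resources_only":
        # The timeline is untouched.
        return [list(t) for t in project]
    raise ValueError("unknown load mode: " + mode)
```

In this model, loading three mp3 files with "replace_concatenate" gives a single track holding all three in order, while "replace" gives three parallel tracks.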

7.2.2 CONFORM PROJECT

This causes the project settings to be changed to match the 1st loaded

media file. Video size, frame rate, and sample rate are changed, but not

the number of channels. This only happens in the load modes which replace

the current project.

7.2.3 LOADING MULTIPLE FILES

In the file selection box go to the list of files. Select a file. Go

to another file and select it while holding down CTRL. This

selects one additional file. Go to another file and select it while

holding down SHIFT. This selects every intervening file. This

behavior is available in almost every list box.

Select a bunch of mp3 files and choose Replace current project and

concatenate tracks as the load mode to create a song playlist.

7.2.4 FILE PREVIEWS

File previews are shown only if the show preview toggle is enabled.

Highlighting a file causes Cinelerra to try to load a preview. There are

certain rules for which files it can preview. There are limits on the

frame size and file size of an image file. Certain EDLs can be

previewed, usually limited to a single video track with no effects &

only 1 asset. The reason an EDL can’t be previewed will usually be

printed on the command line.

7.3 LOADING THE BACKUP

There is one special XML file on disk at all times. After every

editing operation Cinelerra saves the current project to a backup in

$HOME/.bcast/backup.xml. In the event of a crash go to

File->load backup to load the backup. It is important after a

crash to restart Cinelerra without performing any editing operations.

Loading the backup should be the first operation or you’ll overwrite

the backup.

7.4 SAVING FILES

When Cinelerra saves a file it saves an edit decision list of the

current project but doesn’t save any media. Go to File->save

as.... Select a file to overwrite or enter a new file. Cinelerra

automatically concatenates .xml to the filename if no

.xml extension is given.

The saved file contains all the project settings and locations of every

edit but instead of media it contains pointers to the original media

files on disk.

For each media file the XML file stores either an absolute path or just

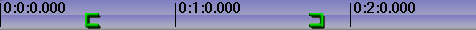

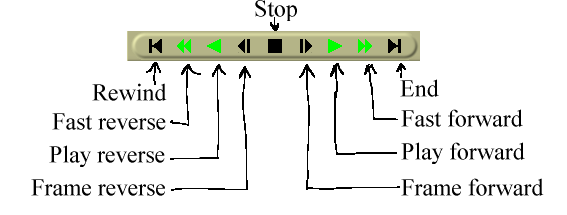

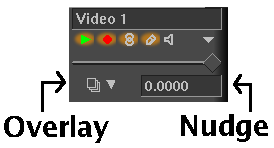

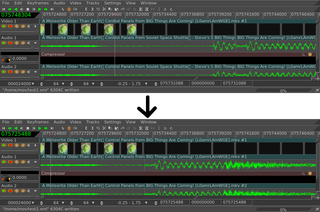

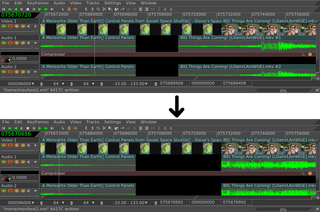

the relative path. If the media is in the same directory as the XML

file a relative path is saved. If it’s in a different directory an

absolute path is saved.
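
The path rule can be written down in a few lines. The following Python sketch only restates the rule described here and is not Cinelerra's own code. The function name is hypothetical, and it assumes that media in a subdirectory of the XML file's directory counts as being in a different directory.

```python
import os


def stored_media_path(xml_path, media_path):
    """Return the path a project file would record for a media file,
    following the rule described above."""
    xml_dir = os.path.dirname(os.path.abspath(xml_path))
    media_abs = os.path.abspath(media_path)
    if os.path.dirname(media_abs) == xml_dir:
        # Same directory as the XML file: only the file name is kept.
        return os.path.basename(media_abs)
    # Any other directory: the full absolute path is kept.
    return media_abs
```

For a project saved as /home/me/proj/show.xml, media at /home/me/proj/clip.mov would be recorded as clip.mov, while media at /media/clip.mov would be recorded with its full path.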

In order to move XML files around without breaking the media linkages

you either need to keep the media in the same directory as the XML file

forever or save the XML file in a different directory than the media

and not move the media ever again.

If you want to create an audio playlist and burn it on CD-ROM, save the

XML file in the same directory as the audio files and burn the entire

directory. This keeps the media paths relative.

XML files are useful for saving the current state before going to sleep

and saving audio playlists but they’re limited in that they’re specific

to Cinelerra. You can’t play XML files in a dedicated movie player.

Realtime effects in an XML file have to be resynthesized every time you

play it back. The XML file also requires you to maintain copies of all

the source assets on hard drives, which can take up space and cost a

lot of electricity to spin. For a more persistent storage of the

output there’s rendering.

7.5 RENDERING FILES

Rendering takes a section of the timeline, performs all the editing,

effects and compositing, and stores it in a pure movie file. You can

then delete all the source assets, play the rendered file in a movie

player, or bring it back into Cinelerra for more editing. It’s very

difficult to retouch any editing decisions in the pure movie file,

however, so keep the original assets and XML file around several days

after you render it.

All rendering operations are based on a region of the timeline to be

rendered. You need to define this region on the timeline. The

navigation section describes methods of defining regions.

See NAVIGATING THE PROJECT. The rendering functions define the

region based on a set of rules. When a region is highlighted or in/out

points are set, the affected region is rendered. When no region is

highlighted, everything after the insertion point is rendered. To

render the entire track, position the insertion point at the beginning

of the track and unset all in/out points.
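
These rules can be summarized as a small decision function. The following Python sketch uses times in seconds and hypothetical parameter names. The text does not say which wins when both a highlighted region and in/out points exist, or what happens when only one of the two points is set, so the choices made below are assumptions.

```python
def render_region(project_end, insertion_point, selection=None,
                  in_point=None, out_point=None):
    """Return (start, end) of the region that would be rendered.

    Assumptions of this sketch: a highlighted selection takes priority
    over in/out points, and a missing in or out point falls back to the
    start or end of the project.
    """
    if selection is not None:
        return selection
    if in_point is not None or out_point is not None:
        start = 0.0 if in_point is None else in_point
        end = project_end if out_point is None else out_point
        return (start, end)
    # Nothing highlighted and no in/out points: render from the
    # insertion point to the end.
    return (insertion_point, project_end)
```

With no selection and no in/out points, an insertion point of 0 makes the function return the whole project, which matches the advice about rendering an entire track.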

7.5.1 SINGLE FILE RENDERING

The fastest way to get media to disk is to use the single file

rendering function.

Go to File->render to bring up the render dialog. Select the

magnifying glass to bring up a file selection dialog. This determines

the filename to write the rendered file to and the encoding parameters.

In the render dialog select a format from the File Format menu.

The format of the file determines whether you can render audio or video

or both. Select the Render audio tracks toggle to generate

audio tracks and Render video tracks to generate video tracks.

Select the wrench next to each toggle to set compression

parameters. If the file format can’t store audio or video the

compression parameters will be blank. If Render audio tracks or

Render video tracks is selected and the file format doesn’t

support it, trying to render will pop up an error.

The Create new file at each label option causes a new file to be

created when every label in the timeline is encountered. This is

useful for dividing long audio recordings into individual tracks. When

using the renderfarm, Create new file at each label causes one

renderfarm job to be created at every label instead of using the

internal load balancing algorithm to space jobs.

When Create new file at each label is selected, a new filename

is created for every output file. If the filename given in the render

dialog has a 2 digit number in it, the 2 digit number is overwritten

with a different incremental number for every output file. If no 2

digit number is given, Cinelerra automatically concatenates a number to

the end of the given filename for every output file.
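
The naming rule can be sketched as follows. This is a Python illustration of the rule as described, not Cinelerra's implementation. The function name, the starting number, the use of the first 2 digit run found in the name, and the width of the appended number (three digits here) are all assumptions.

```python
import re


def label_filenames(filename, count, first=1):
    """Return the output file names for `count` labeled sections.

    If the name contains a 2 digit number, that number is overwritten.
    Otherwise a number is appended to the end of the name.
    """
    match = re.search(r"\d\d", filename)
    names = []
    for i in range(count):
        if match:
            number = "%02d" % (first + i)
            names.append(filename[:match.start()] + number
                         + filename[match.end():])
        else:
            # Appended width of 3 digits is an assumption of this sketch.
            names.append(filename + "%03d" % (first + i))
    return names
```

Giving the render dialog a name that already contains a 2 digit number keeps the file extension at the end of every output name, which is usually what a movie or audio player expects.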

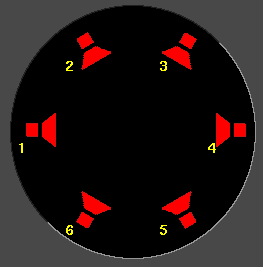

In the filename /hmov/track01.wav the 01 would be